An Agentic-Product Research Teardown

What synthetic user research revealed that real-user research couldn't, and what the product team should have done differently.

Blake Aber · Predicate Ventures · 2026

The product I'm going to describe doesn't exist under that name. It's a fictionalized composite. Close enough to be useful, sanitized enough to protect everyone involved. The methodology is real. The finding was real.

The product

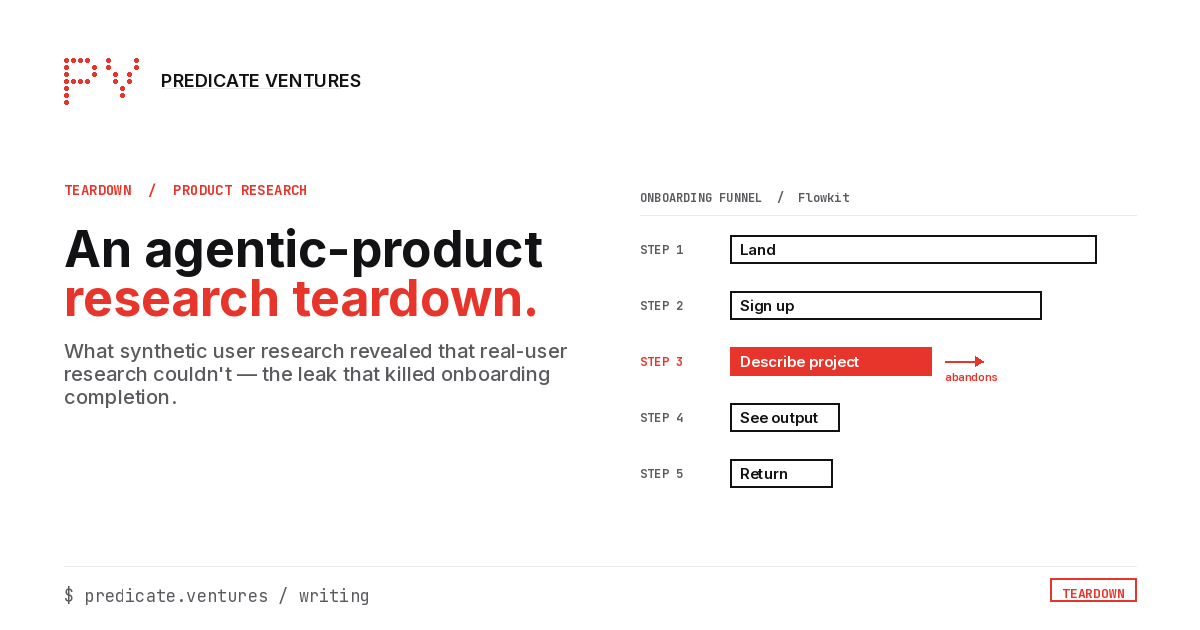

Call it Flowkit: an agentic task-management tool for small creative teams. The core promise: you describe a project in plain language, and Flowkit breaks it into tasks, assigns owners, and tracks progress without you touching a spreadsheet. The founding team had built a technically impressive prototype. Demos were strong. Waitlist was healthy.

The problem showed up six weeks post-launch. Most users who started onboarding didn't come back. Not because the product was broken. It worked. They just didn't return.

Why real-user research couldn't diagnose it

The team ran standard user interviews. They talked to a dozen people who had onboarded. The feedback was uniformly positive: "Really powerful," "Love the vision," "Will definitely use it more once I have a bigger project." The team heard this and concluded the timing was off. Users didn't have the right project yet. They decided to wait.

This is the trap. The users they interviewed were the users who stayed. The users who left didn't accept Calendly invites. They just stopped. Real-user research structurally excludes the leakers (the 70% who abandoned before reaching the moment of value) because those people are no longer reachable through in-product recruitment.

The synthetic persona that surfaced the signal

Using the Voice-of-Agents methodology, I built behavioral archetypes from public signals: forum posts, app-store reviews of competing tools, support threads, and Reddit discussions where people described why they stopped using task-management software. I wasn't looking at Flowkit users. I was looking at behavioral patterns that predicted abandonment in this category.

One archetype emerged clearly: the Efficiency-Seeker.

The Efficiency-Seeker adopts tools quickly but abandons them just as quickly when the time cost of setup exceeds the perceived time savings of the output. They evaluate tools in the first session. If they don't see value in the first 10 minutes, they close the tab and don't return. They do not give tools "another chance."
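To make the archetype's decision rule concrete, here is a toy sketch in Python. It is not the Voice-of-Agents methodology itself, only the 10-minute rule written as code. The `Step` and `EfficiencySeeker` names, the step list, and every duration are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Step:
    name: str
    minutes: float      # assumed time the persona spends on this step
    shows_value: bool   # True if useful output is visible once the step ends


@dataclass(frozen=True)
class EfficiencySeeker:
    # From the archetype: no value within the first 10 minutes means they leave.
    patience_minutes: float = 10.0

    def abandons_at(self, flow: Sequence[Step]) -> Optional[str]:
        """Return the step where the persona quits, or None if they reach value."""
        elapsed = 0.0
        for step in flow:
            elapsed += step.minutes
            if elapsed > self.patience_minutes:
                return step.name   # patience ran out before any value appeared
            if step.shows_value:
                return None        # value seen in time, so the persona stays
        # The flow ended without ever showing value.
        return flow[-1].name if flow else None


# Hypothetical onboarding flow; only the "describe project" step comes from the teardown.
flow = [
    Step("sign up", 1.0, False),
    Step("invite team", 2.0, False),
    Step("describe project", 8.0, False),   # blank field, several retries
    Step("review task breakdown", 1.0, True),
]

print(EfficiencySeeker().abandons_at(flow))
```

With these assumed timings the persona runs out of patience at the project-description step. Cut that step to two minutes and the same persona reaches the task breakdown and stays.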

When I ran this archetype against Flowkit's onboarding flow, the failure point was obvious: step 3.

Step 3 asked users to describe their first project in plain language. The intent was to let the agentic system generate the initial task breakdown. But the text field was blank. No placeholder. No example. No scaffolding. The Efficiency-Seeker, confronted with a blank text box asking for a "project description," froze. They didn't know what level of detail to provide. They tried two or three phrasings, got results that felt off, and closed the tab.

The product team had designed step 3 for the user they wanted: the one who was excited to explore the system's capabilities. They hadn't designed it for the user who would arrive in a hurry, already skeptical, looking for a reason to stay.

The specific product change implied

Replace the blank text field at step 3 with a structured template: three fields (project name, team members, first deadline) that pre-populate a project description the system can work with. Give users a "use this as-is" button so the Efficiency-Seeker can skip past the description stage entirely and get to the task breakdown immediately.

The Efficiency-Seeker doesn't want to craft the perfect description. They want to see whether the output is useful. Getting them to the output is the job of step 3, not asking them to formulate the input.
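A minimal sketch of what that template could look like in code, assuming the three fields are simply joined into a plain-language description that is then handed to the task-breakdown system. The `ProjectTemplate` class, the sentence format, and the sample values are assumptions for illustration, not Flowkit's actual implementation.

```python
from dataclasses import dataclass
from datetime import date
from typing import Tuple


@dataclass(frozen=True)
class ProjectTemplate:
    # The three structured fields that replace the blank text box.
    project_name: str
    team_members: Tuple[str, ...]
    first_deadline: date

    def to_description(self) -> str:
        """Pre-populate a plain-language project description from the fields."""
        members = ", ".join(self.team_members)
        return (
            f"Project: {self.project_name}. "
            f"Team: {members}. "
            f"First deadline: {self.first_deadline.isoformat()}."
        )


# Sample values are made up for the example.
template = ProjectTemplate(
    project_name="Spring campaign",
    team_members=("Ana", "Ben"),
    first_deadline=date(2026, 3, 14),
)

# The "use this as-is" path: send the generated text straight to the breakdown step.
description = template.to_description()
print(description)
```

The point of the design is that the user never has to decide how much detail to write. Three short answers produce an input the system can work with, and anyone who wants to edit the generated description still can.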

The founding team shipped this change. Onboarding completion rate improved materially in the first two weeks.

What this means for your cohort founders

The founders in your program are running user interviews on the people who stayed. Those are not the users who will determine whether the product has product-market fit. The users who matter most (the ones who left before completing onboarding) are invisible to conventional user research because they're gone.

Synthetic personas built from behavioral archetypes let you hear from those users before the real-world abandonment happens. You can simulate the Efficiency-Seeker's experience in 30 minutes, for under $2 in model costs, before you've built the product. Or after launch, when conventional research has already failed to explain your churn.

The methodology isn't a replacement for talking to real users. It's what fills the gap when real users can't, or won't, tell you why they left.