Harness Engineering

Why production AI is 90% harness and 10% model. And what most pilots miss.

Blake Aber · Predicate Ventures · 2026

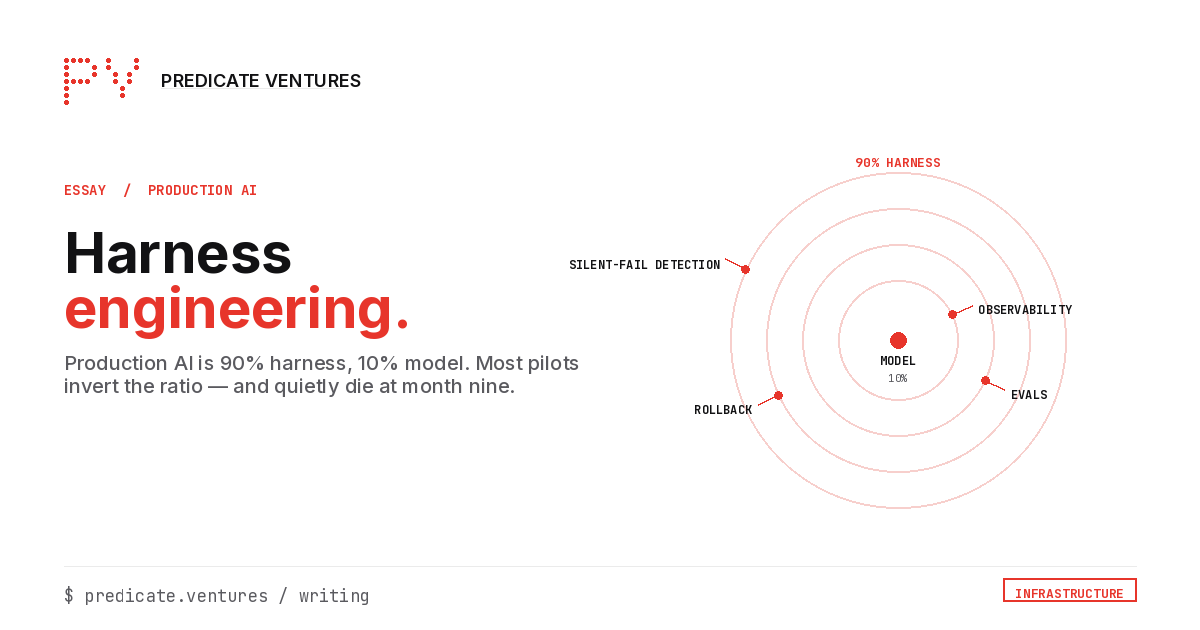

The gap between an AI demo that lands in a steering-committee deck and an AI system that stays in production isn't the model. It's the harness: the standing infrastructure around the model that makes it observable, auditable, and recoverable when things go sideways. Roughly 90% of what determines whether a pilot ships is harness work. About 10% is model capability. Most enterprise AI programs invert this ratio in budgeting, staffing, and attention.

This is why pilots that pass validation quietly die in production at month nine. The model still works at month nine. The harness was never built.

The four primitives

Observability. Not a dashboard. The standing infrastructure that runs the deployed model against named scenarios continuously, tracks output drift over time, and flags when the input distribution has shifted enough that the validation set no longer covers production reality. Observability is what turns "the model passed at week zero" into "the model is still passing." Without it, the model is a snapshot, not a system.
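One way to make "the input distribution has shifted" concrete is a drift score computed on a schedule. The sketch below uses the Population Stability Index (PSI) over a single numeric input feature. It is an illustration, not a prescribed method: the function names, the 10-bin layout, and the 0.25 threshold (a commonly quoted rule of thumb) are all assumptions to tune per workload.

```python
import math
from typing import List, Sequence

# Assumed threshold: PSI above roughly 0.25 is often treated as a significant shift.
DRIFT_THRESHOLD = 0.25


def _bin_fractions(values: Sequence[float], lo: float, hi: float, bins: int) -> List[float]:
    """Fraction of values in each equal-width bin; out-of-range values go to the edge bins."""
    if not values:
        raise ValueError("need at least one value")
    counts = [0] * bins
    width = (hi - lo) / bins
    for v in values:
        idx = int((v - lo) / width) if width > 0 else 0
        counts[min(max(idx, 0), bins - 1)] += 1
    eps = 1e-6  # floor so that empty bins do not produce log(0)
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(
    baseline: Sequence[float], production: Sequence[float], bins: int = 10
) -> float:
    """PSI between the validation-time sample and a recent production sample."""
    lo, hi = min(baseline), max(baseline)  # bin edges are fixed by the baseline
    expected = _bin_fractions(baseline, lo, hi, bins)
    actual = _bin_fractions(production, lo, hi, bins)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def input_has_drifted(
    baseline: Sequence[float],
    production: Sequence[float],
    threshold: float = DRIFT_THRESHOLD,
) -> bool:
    """True when production inputs no longer resemble what validation covered."""
    return population_stability_index(baseline, production) > threshold
```

Run against a rolling window of production inputs, a check like this is what separates "passed at week zero" from "still covered by the validation set."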

Evals. Not the test set used at training time. The eval harness that runs in production, against real workloads, on a cadence that catches regressions before they accumulate. Evals must be cheap enough to run continuously, structured enough to detect specific failure modes, and owned by someone. Not "the platform team." A specific named person whose job it is to look at the dashboard. Without ownership, evals decay.
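A minimal sketch of what "structured and owned" could look like in code. Each case names the failure mode it probes, and the suite carries a single named owner. The class names, the `min_pass_rate` field, and the stub model in the usage note are hypothetical and not taken from any particular eval framework.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class EvalCase:
    name: str                      # the specific failure mode this case probes
    prompt: str                    # input sampled from real workloads
    check: Callable[[str], bool]   # returns True when the output is acceptable


@dataclass(frozen=True)
class EvalSuite:
    owner: str                     # a named person, not a team
    cases: List[EvalCase]
    min_pass_rate: float           # below this, the run counts as a regression


@dataclass(frozen=True)
class EvalReport:
    owner: str
    pass_rate: float
    failed: List[str]
    regressed: bool


def run_suite(suite: EvalSuite, model: Callable[[str], str]) -> EvalReport:
    """Run every case against the deployed model and summarise the result."""
    if not suite.cases:
        raise ValueError("an eval suite needs at least one case")
    failed = [c.name for c in suite.cases if not c.check(model(c.prompt))]
    pass_rate = 1 - len(failed) / len(suite.cases)
    return EvalReport(
        owner=suite.owner,
        pass_rate=pass_rate,
        failed=failed,
        regressed=pass_rate < suite.min_pass_rate,
    )
```

A scheduler would call `run_suite` on the chosen cadence and route any report with `regressed=True` to `report.owner`, so a failing run always lands with one person.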

Rollback. Not the model-version manifest. The operational mechanism that takes a deployed model out of production within a defined window (minutes, not weeks) when something is going wrong. Rollback includes the human authority (who can pull the model), the technical mechanism (how the model is pulled), the fallback path (what happens to in-flight requests), and the post-rollback audit (what's reconstructed afterward). Most programs treat rollback as something they'll figure out if needed. The programs that ship treat it as the first thing they design.
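The four parts of rollback named above (authority, mechanism, fallback path, audit) can be sketched as one small router. This is an illustration under assumptions: `ModelRouter` and its fields are hypothetical names, and a real deployment would persist the flag and the audit trail rather than hold them in memory.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set

Handler = Callable[[str], str]


@dataclass
class ModelRouter:
    primary: Handler        # the deployed model
    fallback: Handler       # the named fallback path, e.g. a rules engine or a human queue
    authorized: Set[str]    # the people who may pull the model
    rolled_back: bool = False
    audit_log: List[Dict[str, object]] = field(default_factory=list)

    def handle(self, request: str) -> str:
        """Route each request; once rolled back, new requests take the fallback path."""
        handler = self.fallback if self.rolled_back else self.primary
        return handler(request)

    def rollback(self, requested_by: str, reason: str) -> None:
        """Pull the model. Only a named authority can do this, and every pull is recorded."""
        if requested_by not in self.authorized:
            raise PermissionError(f"{requested_by} has no rollback authority")
        self.rolled_back = True
        self.audit_log.append(
            {"at": time.time(), "by": requested_by, "reason": reason}
        )
```

Because the switch is a single flag checked on every request, a tabletop exercise can call `rollback` in a staging environment and confirm that the next request is served by the fallback.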

Silent-failure detection. The hardest of the four. Models fail in two ways: catastrophically (everyone notices) and silently (the model produces plausible-looking output that's wrong). Silent failure is worse than a crash, because nothing prompts anyone to look. Users learn to trust the model on the 90% it gets right and never notice the 10% it gets wrong until the audit. Silent-failure detection requires instrumenting the boundary between the model and the downstream consumer: what the model said, what the user did with it, and whether the outcome of that action was consistent with the confidence the model reported. Without this instrumentation, the model can be wrong for months before anyone notices.
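A minimal sketch of boundary instrumentation, assuming the model exposes a confidence score and that downstream outcomes eventually become known. It logs the three signals described above and compares stated confidence with observed accuracy on the outputs users accepted. The `BoundaryEvent` fields and the 0.1 tolerance are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class BoundaryEvent:
    model_output: str                 # what the model said
    confidence: float                 # the model's stated confidence, 0.0 to 1.0
    user_accepted: bool               # what the user did with it
    outcome_correct: Optional[bool]   # None until the downstream outcome is known


def confidence_gap(events: Sequence[BoundaryEvent]) -> float:
    """Mean stated confidence minus observed accuracy, over accepted and resolved outputs."""
    resolved = [
        e for e in events if e.user_accepted and e.outcome_correct is not None
    ]
    if not resolved:
        return 0.0  # nothing to compare yet
    mean_confidence = sum(e.confidence for e in resolved) / len(resolved)
    accuracy = sum(1 for e in resolved if e.outcome_correct) / len(resolved)
    return mean_confidence - accuracy


def silent_failure_suspected(
    events: Sequence[BoundaryEvent], tolerance: float = 0.1
) -> bool:
    """Flag when users keep accepting outputs that turn out wrong more often than claimed."""
    return confidence_gap(events) > tolerance
```

A positive gap means the model is more confident than its accepted outputs justify, which is the signature of plausible-looking but wrong output that no crash report would surface.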

The month-nine pattern

Most enterprise AI initiatives quietly die at month nine. Not at validation. Validation passed at week zero. Month nine is when:

- The input distribution has shifted (seasonal, organizational, regulatory) and observability wasn't tracking it

- Evals weren't running on real workloads, just on a frozen validation set

- An operator misuse pattern emerged that nobody caught because silent-failure detection wasn't deployed

- A compliance review pulled the audit log and found the trail was insufficient

- The technical owner who built the pilot moved teams and rollback authority became unclear

The pilot didn't fail because the model got worse. It failed because the harness around the model was never built, and the model's environment changed.

This is the failure mode that doesn't show up in vendor demos because vendor demos run against the static dataset the model was tuned on. It doesn't show up in pilot reports because pilots end before month nine. It shows up in the steering-committee meeting at month ten where someone asks why the metrics are flat and discovers the model has been silently wrong for a quarter.

What "harness engineering as design input" looks like operationally

The programs that ship at scale make four decisions before the model is selected:

- Observability and eval ownership and cadence. A specific person owns the standing eval. Not a team. A person, named in a decision record, with a calendar reminder.

- Audit-log scope and retention. The audit boundary is scoped to the decision granularity that satisfies regulatory and operational review, not just to "everything the model did." Designed before deployment, not after the first incident.

- Rollback authority and mechanism. A specific person can pull the model in minutes. The mechanism is tested before deployment, tabletop-exercise style. The fallback path is named.

- Silent-failure detection at the boundary. Instrumentation for what the model said, what the user did, what the outcome was. Designed into the integration, not retrofitted.

These four decisions aren't engineering nice-to-haves. They're the difference between a pilot that ships and a pilot that quietly dies at month nine.
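The four decisions can be written down as a record that must be complete before model selection starts. The sketch below is one hypothetical shape for such a decision record. The field names and units are assumptions, not a standard schema.

```python
from dataclasses import dataclass, fields
from typing import List, Tuple


@dataclass(frozen=True)
class HarnessDecisionRecord:
    # Observability ownership and cadence
    eval_owner: str
    eval_cadence_hours: int
    # Audit-log scope and retention
    audit_log_scope: str
    audit_retention_days: int
    # Rollback authority and mechanism
    rollback_authority: str
    rollback_window_minutes: int
    fallback_path: str
    # Silent-failure detection at the boundary
    boundary_signals: Tuple[str, ...]


def missing_decisions(record: HarnessDecisionRecord) -> List[str]:
    """Names of decisions still empty (blank string, zero, or empty tuple)."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]
```

A project gate could refuse to start model selection while `missing_decisions` returns anything, which turns "designed before deployment" into a check rather than an intention.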

Why this matters now

Two pressures are converging. First, model capability is plateauing across vendors. The differentiator at production scale shifts from which model you select to which harness you've built. The orgs with harness discipline can deploy any model safely. The orgs without it can't safely deploy even the best model. Second, regulatory expectations are tightening. Auditors are asking how controls were designed in from the start, not only what a review found at the end. Programs without explicit harness artifacts are going to discover this expensively.

Closing the harness engineering gap is the structural advantage in production AI for the next 24 months. The orgs investing in real observability, evals, rollback, and silent-failure detection now will be running at a different operational tempo by 2027. The orgs treating those primitives as post-deployment retrofits will be running reactive rebuilds.