The IC Trap

Why assigning AI transformation to your best junior engineer is a rational decision that almost always fails.

Blake Aber · Predicate Ventures · 2026

Let me steelman the decision first, because it genuinely looks smart.

A portfolio company has a talented young ML engineer. She's excited about the AI initiative. She understands the technical stack. She has time, or can make time. She's already an employee, so there's no recruiting cost. And she's cheap relative to a senior outside hire.

So the GP suggests: put her in charge of the AI program. She builds the prototype. It's impressive. Everyone agrees it could ship in 90 days.

Eighteen months later, the prototype is still not in production. The engineer is still in charge. The company is still "almost ready to ship."

This is the IC trap.

What the IC can't do

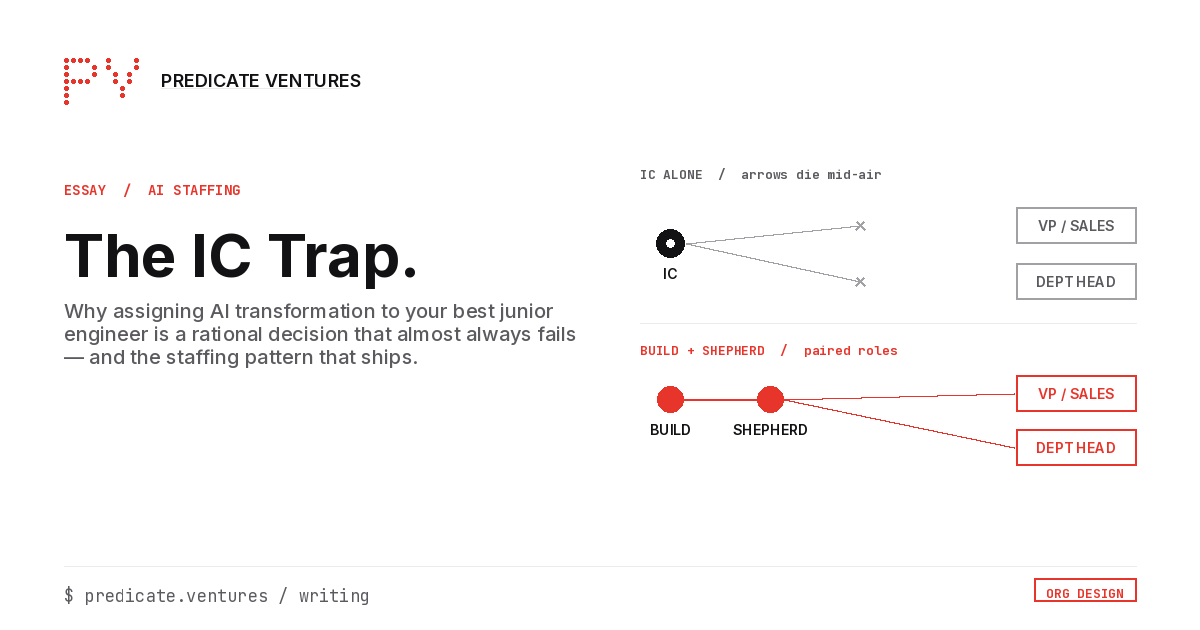

The individual contributor can build. What she can't do, not because she's incompetent but because of her organizational position, is shepherd.

Shepherding an AI program into production requires walking into a VP's office and saying: "This workflow needs to change, and I need you to change it before we can ship." It requires telling a department head that the process they've run for seven years is the reason the AI can't work. It requires the authority to reject a scope change from a senior stakeholder, even when that stakeholder outranks you.

An IC doesn't have this authority. She can flag the organizational blockers. She can write them up in a Confluence doc. She can escalate them to her manager. But she cannot resolve them herself. The blockers sit in the backlog, and the prototype sits in development.

The failure mode looks like this: excellent technical work, accumulating organizational debt, a growing list of "dependencies on other teams" that never resolve. The prototype is impressive at month three. It's still impressive at month eighteen. It's never in production.

A composite example

At one portco where I've seen this pattern, the Head of AI was a brilliant ML engineer in his mid-twenties who'd built four compelling demos in his first six months in the role. The VP of Sales had never seen any of them. Not because the demos were bad. Because no one had built the bridge between "here's what the AI can do" and "here's how the sales team's workflow needs to change to use it." The engineer didn't have the organizational authority to build that bridge. So it never got built.

The demos got better. The production deployment didn't happen.

The staffing pattern that works

The person who builds the AI and the person who shepherds the adoption are almost never the same person. These are different skills, and more importantly, they require different organizational positions.

Build is an IC job: machine learning, engineering, evaluation harness, observability infrastructure. Find your most technically capable person.

Shepherd is a senior practitioner job: workflow change management, stakeholder alignment, specification clarity, production ownership. Find someone with the organizational authority to get a workflow changed, and pair them with the builder.

At portcos under 100 people, the shepherd is often a fractional engagement rather than a full-time hire. The domain expertise is already in the company. What's missing is the person who can move the org around the AI program rather than waiting for the org to accommodate it.