AI Governance Infrastructure for Law Firms: What Policy Can't Catch

Where AI incidents in legal actually come from, and what infrastructure (not policy) prevents them.

Blake Aber · Predicate Ventures · 2026

The policy layer is table stakes. It isn't enough.

When Sullivan & Cromwell apologized to a federal bankruptcy judge in April 2026 for AI hallucinations in a court filing, the firm's apology letter said the firm had policies. Safeguards existed. Those safeguards weren't followed.

That framing, "the safeguard existed but wasn't followed," is how a policy failure gets described. But something more specific happened: a hallucination was generated, wasn't caught at generation time, wasn't caught at review time, and made it into a document that got filed.

That's not a policy problem. It's an infrastructure problem.

The distinction matters because it determines what you build next.

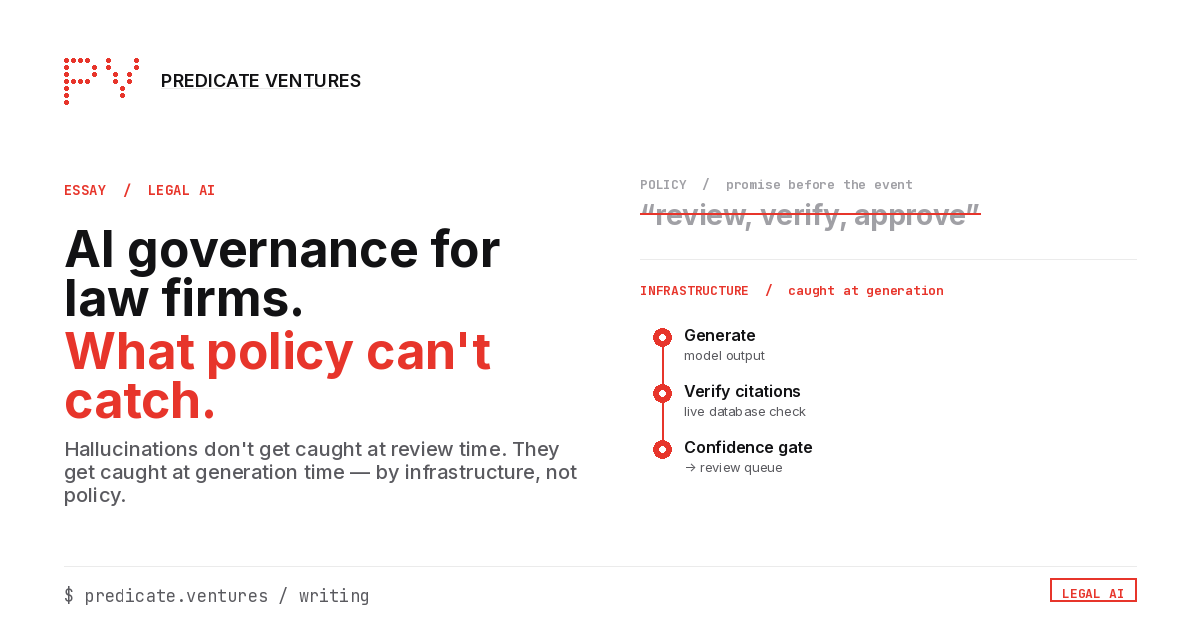

What policy can and can't do

Policy is a promise made before the event. A well-written AI acceptable-use policy says: don't submit output you haven't reviewed; verify citations before they go into a document; a human must approve anything client-facing.

This works when the human executing the task has time, attention, and professional accountability in that moment. It fails when any one of those is missing: under a deadline, with a junior practitioner, on a late-night run.

Policy can't:

- Verify a citation at the point of generation

- Flag output that has drifted below a confidence threshold

- Stop hallucinated text from appearing in a draft before a human ever sees it

- Detect when the underlying model is behaving differently than it was in testing

Policy can:

- Set the expectation that review must happen

- Define who bears accountability when it doesn't

- Create a paper trail after the fact

The first list is prevention. The second is compliance.

What infrastructure does instead

An AI harness layer operates at the point of generation, not at the point of review. For legal work specifically, three components matter most:

1. Citation verification at generation time. Not a prompt instruction ("always verify citations"). A pipeline step. Every legal source referenced by the AI system is verified against a live database before the output is surfaced to the user. If the citation doesn't resolve, the output is flagged before it reaches a document. This is the component that would have caught the S&C error before it left the system.

2. Confidence thresholds with explicit escalation. Most LLMs don't communicate confidence levels by default. They output text with the same fluency whether the underlying generation is well-supported or a hallucination. A governed system adds a confidence scoring layer and an explicit routing rule. Output below a set threshold goes to a review queue before it's surfaced. Output above the threshold is still human-reviewed, but skips the secondary check. The threshold becomes an operationalized risk tolerance, not a general instruction to "be careful."

3. Eval drift monitoring. Models change. Vendor systems update, fine-tune, and swap underlying models without always surfacing clear versioning to their enterprise customers. A production AI system that performed well in testing may behave differently six months later. Without a monitoring layer that tracks output quality over time, that drift is invisible until it produces an incident. Eval monitoring is a production-phase activity, not a testing-phase one.
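To make the three components concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a reference implementation: the names `Draft`, `Route`, `route_draft`, and `has_drifted` are hypothetical, the `resolves` callable stands in for whatever live citation database a firm actually uses, the confidence score is assumed to come from a separate scoring layer, and the threshold and tolerance values are placeholders a firm would set itself.

```python
from dataclasses import dataclass
from enum import Enum
from statistics import mean
from typing import Callable, Sequence


class Route(Enum):
    FLAGGED = "flagged"            # a citation did not resolve
    REVIEW_QUEUE = "review_queue"  # below threshold: secondary check first
    SURFACE = "surface"            # shown to the user, still human-reviewed


@dataclass(frozen=True)
class Draft:
    text: str
    citations: tuple[str, ...]
    confidence: float  # assumption: supplied by a scoring layer, in [0, 1]


def route_draft(
    draft: Draft,
    resolves: Callable[[str], bool],  # assumption: wraps a citation lookup
    threshold: float,
) -> tuple[Route, list[str]]:
    """Gate a draft at generation time, before any user sees it."""
    # Component 1: every citation must resolve, or the draft is flagged.
    unresolved = [c for c in draft.citations if not resolves(c)]
    if unresolved:
        return Route.FLAGGED, unresolved
    # Component 2: the threshold is an explicit routing rule.
    if draft.confidence < threshold:
        return Route.REVIEW_QUEUE, []
    return Route.SURFACE, []


def has_drifted(
    baseline_scores: Sequence[float],
    recent_scores: Sequence[float],
    tolerance: float,
) -> bool:
    """Component 3: compare mean eval scores from testing to a recent window."""
    return mean(baseline_scores) - mean(recent_scores) > tolerance


# Usage: the second citation is deliberately invented for illustration.
known = {"Marbury v. Madison, 5 U.S. 137 (1803)"}
draft = Draft(
    text="...",
    citations=(
        "Marbury v. Madison, 5 U.S. 137 (1803)",
        "Doe v. Placeholder, 000 F.0th 0 (invented)",
    ),
    confidence=0.95,
)
route, unresolved = route_draft(draft, lambda c: c in known, threshold=0.7)
# route is Route.FLAGGED despite the high confidence: citation checks run first.
```

The ordering is the design choice worth noticing. Citation resolution runs before the confidence check, so a fluent, high-scoring draft with an unresolvable source is still flagged. A production drift monitor would likely use a proper eval suite and a statistical test rather than a difference of means; the point here is only that the comparison runs continuously in production, not once in testing.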

The three risks firms aren't accounting for

1. Policy is visible; harness is invisible. Boards, legal ops committees, and general counsel can review a policy document. They can't easily audit whether a citation-verification pipeline exists and is functioning. This creates pressure to solve the visible problem (produce a policy) at the expense of the invisible one (build the infrastructure). The S&C incident is the outcome when that trade-off is made.

2. The tool vendor is not responsible for your harness. Harvey, CoCounsel, and Lexis+ AI are tools. They don't know what your workflows require, what your confidence thresholds should be, or how their system integrates with your document management and review processes. The harness layer (the constraints and monitoring that sit between the tool's output and your firm's document) is your responsibility to design and build.

3. The governance hire alone doesn't solve it. Hiring a Chief AI Officer is the right move. But a CAIO with a policy mandate and no harness build is one deadline away from the same outcome as no CAIO. The value of the hire depends on whether the first deliverable is a policy framework or an infrastructure spec.

What to look for in any AI deployment evaluation

Before a new AI capability goes into production at your firm, ask:

- Where in the workflow does the system's output get verified, and what is the mechanism of verification? Is it human review only, or is there a pipeline step?

- What happens when the model produces output it shouldn't? Is there a flagging layer, or does it surface to the user regardless?

- How will you know if model behavior drifts from what you observed in testing? What's the monitoring mechanism?

- Who owns the harness specification: the vendor, your team, or no one?

These aren't vendor-evaluation questions. They're infrastructure questions. The firms that ask them before deployment are the ones that won't be writing apology letters.