The next chapter org psychology was always going to write

There's a bold claim making the rounds in AI circles: that organizational psychology as a discipline is being fundamentally reshaped by multi-agent systems.

I think the claim is correct. But not for the reasons most people assume.

The standard version of this argument goes something like: "AI agents are so powerful that they'll change everything about how we work, including the science of how we study work." That framing is both overconfident about the technology and underconfident about the discipline. It treats organizational psychology as a passive recipient of technological disruption rather than what it actually is — a field that has always evolved in direct response to new coordination architectures.

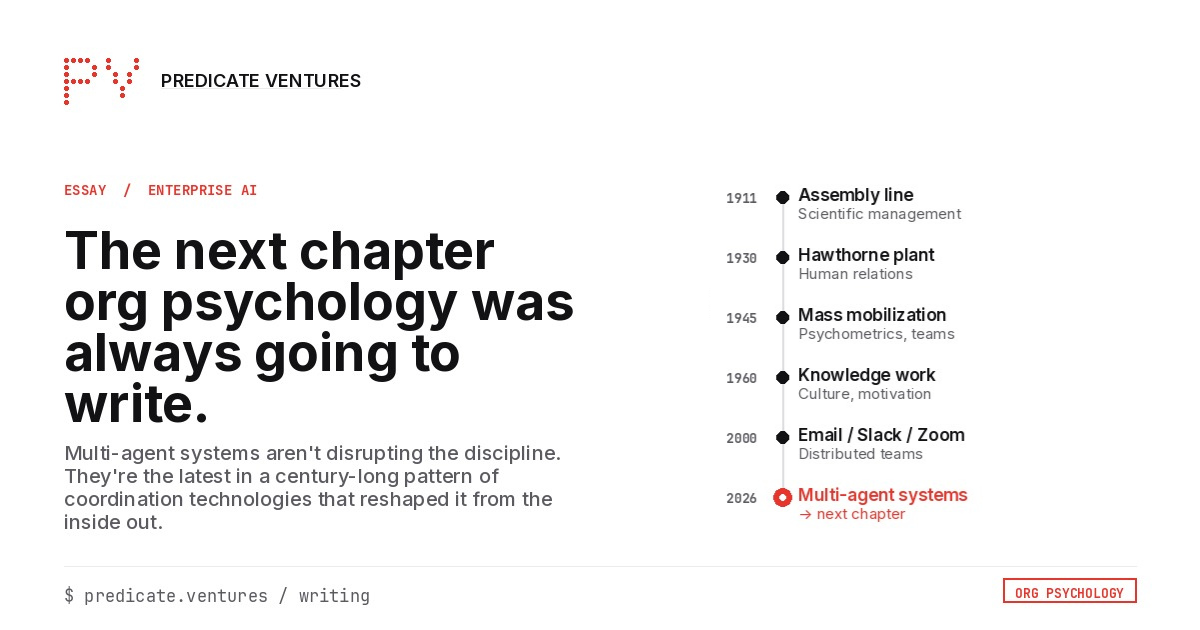

Once you see that pattern, multi-agent systems stop looking like a speculative leap and start looking like the obvious next chapter.

The pattern: coordination technology in, disciplinary evolution out

Organizational psychology didn't emerge from abstract theorizing. It emerged from the factory floor.

Frederick Taylor's scientific management in the early 1900s wasn't just an efficiency framework. It was the first systematic attempt to optimize the fit between humans and the coordination technology of the industrial age: the mechanized factory and, soon after, the assembly line. The resistance it generated, the social dynamics it created, and the questions it raised about worker autonomy and meaning became the raw material for an entirely new field.

The Hawthorne Studies in the 1920s and 1930s arose because Taylorism couldn't explain what was actually happening when humans coordinated at scale. Elton Mayo discovered that informal relationships, group norms, and emotional dynamics — things that had nothing to do with time-and-motion studies — were driving productivity as much as process design. The human relations movement was born.

World War II forced another evolution. Suddenly, the coordination challenge wasn't factory throughput — it was placing millions of people into the right roles across a technologically complex military apparatus. New psychometric tools, team development methods, and performance systems emerged not from theory but from operational necessity.

The shift from industrial to knowledge work in the mid-20th century spawned yet another wave: organizational culture, psychological safety, team dynamics, intrinsic motivation. Peter Drucker didn't invent knowledge work and then wait for psychologists to study it. The coordination architecture changed, and the discipline followed.

Email. The cubicle. Distributed teams. Slack. Zoom. Each coordination technology didn't just change work — it changed what organizational psychologists needed to study, measure, and design for.

The pattern is remarkably consistent: new coordination technology → new social dynamics → new organizational psychology.

Why multi-agent systems are the next inflection

Every previous coordination technology changed how humans coordinated with other humans. Email changed asynchronous communication. Slack changed real-time collaboration. Zoom changed distributed presence. But the agent on the other end was always a person.

Multi-agent systems introduce something categorically new: coordination between humans and non-human agents, and between non-human agents themselves.

This isn't theoretical. Anthropic's 2026 Agentic Coding Trends Report describes multi-agent systems replacing single-agent workflows, with orchestrator agents delegating subtasks to specialized agents working in parallel — what they call less "chatbot helper," more "AI scrum team." Their Opus 4.6 model introduces "agent teams" where multiple AI instances work in parallel, with a lead session coordinating work, assigning tasks, and synthesizing results while team members communicate directly.

The infrastructure being built for these systems reads like an org design textbook: role definition, task allocation, permissions, state management, handoff protocols, error recovery. Anthropic's Managed Agents platform — already in production at Notion, Asana, Sentry, and Rakuten — handles secure sandboxing, long-running session management, multi-agent coordination, and scoped permissions.

These are organizational coordination primitives. They just happen to be running on silicon instead of being mediated through Slack channels.
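
To make the parallel concrete, here is a minimal Python sketch of those primitives written as an org chart in code. Everything in it is hypothetical: the Agent, Task, and Orchestrator names, the permission strings, and the stubbed agent functions are illustrations, not Anthropic's API or any real platform's. It shows role definition, scoped permissions, task allocation, a shared log standing in for state management, and error recovery that ends in escalation to a human.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    name: str
    role: str                    # role definition
    permissions: frozenset       # scoped permissions (hypothetical strings)
    run: Callable[[str], str]    # the agent's work, stubbed for illustration


@dataclass
class Task:
    description: str
    required_role: str
    required_permission: str


@dataclass
class Orchestrator:
    team: list
    log: list = field(default_factory=list)  # shared state / audit trail

    def assign(self, task: Task) -> Agent:
        # Task allocation: first agent with the right role AND permission.
        for agent in self.team:
            if (agent.role == task.required_role
                    and task.required_permission in agent.permissions):
                return agent
        raise LookupError(f"no agent can take: {task.description}")

    def delegate(self, task: Task, max_attempts: int = 2) -> str:
        agent = self.assign(task)
        for attempt in range(1, max_attempts + 1):
            try:
                result = agent.run(task.description)
                self.log.append(f"{agent.name} completed: {task.description}")
                return result  # handoff back to the lead
            except RuntimeError as err:
                # Error recovery: record the failure, then retry.
                self.log.append(f"{agent.name} failed attempt {attempt}: {err}")
        # Retries exhausted: hand the task to a person.
        self.log.append(f"escalated to human: {task.description}")
        return "ESCALATED"


def write_code(prompt: str) -> str:
    return f"patch for: {prompt}"


def flaky_review(prompt: str) -> str:
    raise RuntimeError("reviewer timed out")  # simulated unreliable agent


team = [
    Agent("coder", "implementer", frozenset({"repo:write"}), write_code),
    Agent("reviewer", "reviewer", frozenset({"repo:read"}), flaky_review),
]
lead = Orchestrator(team)
patch = lead.delegate(Task("fix login bug", "implementer", "repo:write"))
review = lead.delegate(Task("review login patch", "reviewer", "repo:read"))
```

Read as an org design artifact, the escalation branch is the interesting part: it is a reporting line, written down as a policy.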

What the experts are actually saying (and why the disagreements strengthen the case)

The most interesting thing about the current discourse is that even the people who disagree about the technology agree about the organizational implications.

Ethan Mollick, perhaps the most empirically grounded observer of AI in organizations, has been explicit: in a Deloitte interview, he stated flatly that the transition from AI as a tool to AI as a workforce "is not actually a technology problem. It's a process problem." At Valence's 2026 AI & Workforce Summit, he argued that HR — not IT — is the function best positioned to lead organizations through the agentic transformation. That's an organizational psychology claim, not a computer science claim.

His research has surfaced a phenomenon that belongs in every I-O psychology textbook: the most advanced AI users inside organizations are often working in secret, hiding their usage because 2023-era policies leave them fearing punishment instead of feeling enabled. That's a psychological safety problem. Amy Edmondson would recognize it immediately.

Adam Grant has been updating his priors in public — a scientist's highest virtue. At Indeed FutureWorks 2025, he admitted he'd been confident that AI wouldn't catch up to human empathy. Then the evidence showed that people actually felt more supported chatting with AI than with humans in certain contexts. He's now actively researching "cognitive debt" — how accelerated AI adoption may reduce human capacity over time. These are organizational psychology questions that didn't exist three years ago.

Gary Marcus provides the essential counterargument and, paradoxically, strengthens the case. His prediction that AI agents would be "endlessly hyped but far from reliable, except possibly in very narrow use cases" has been largely validated. RAND research found that, by some estimates, over 80% of AI projects fail, roughly twice the failure rate of non-AI IT projects.

But here's the thing: Marcus's critique is about technical reliability, not about whether the organizational questions are real. In fact, his argument makes the organizational psychology case stronger. If you're deploying imperfect agents into human workflows — and organizations are doing this at scale — you need more organizational science, not less. You need better frameworks for trust calibration, human oversight design, error recovery workflows, and the psychology of appropriate delegation to imperfect systems.

Dario Amodei has been perhaps the most direct about the structural implications. In his January 2026 essay "The Adolescence of Technology," he warned that AI could displace half of all entry-level white-collar jobs within one to five years and raised the prospect of wealth concentration exceeding the Gilded Age. These aren't technical predictions. They're organizational and societal restructuring predictions that demand psychological science to navigate.

The specific questions that break existing models

Multi-agent systems don't vaguely "affect" organizational psychology. They break specific existing models and demand new ones:

Trust and delegation. The canonical models of organizational trust — Mayer, Davis, and Schoorman's framework of ability, benevolence, and integrity — were designed for human-to-human relationships. How do you calibrate trust in an agent that's 95% reliable on routine tasks but fails unpredictably on edge cases? Aviation psychology has studied "automation complacency" for decades. Now every knowledge worker faces the same challenge. Mollick captured this perfectly: if we don't think hard about why we're doing work, "we are all going to drown in a wave of AI content."
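
A bit of arithmetic shows why that calibration problem is hard. The sketch below assumes, as a simplification, that each delegated task fails independently with the same probability. Real agent failures cluster on edge cases, so treat the numbers as illustrative only. The function names are made up for this example.

```python
import math


def p_any_failure(reliability: float, n_tasks: int) -> float:
    """Chance that at least one of n_tasks delegated tasks fails,
    assuming independent failures (a simplifying assumption)."""
    return 1.0 - reliability ** n_tasks


def tasks_until_likely_failure(reliability: float, threshold: float = 0.5) -> int:
    """Smallest number of tasks at which the chance of at least one
    failure reaches the threshold (same independence assumption)."""
    return math.ceil(math.log(1.0 - threshold) / math.log(reliability))


# A 95%-reliable agent, as in the example above.
after_twenty = p_any_failure(0.95, 20)        # roughly 0.64
tipping_point = tasks_until_likely_failure(0.95)  # 14 tasks
```

Under those assumptions, an agent that looks trustworthy on any single task is more likely than not to have failed at least once by the fourteenth delegation. That gap between per-task confidence and cumulative risk is where automation complacency lives.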

Team composition. Joan Woodward's contingency theory showed that optimal organizational structure depends on production technology — small-batch versus mass production versus continuous process. Multi-agent systems create a new category entirely: teams where some members are human, some are specialized AI agents, and the coordination architecture is software-defined and reconfigurable in real time. The group dynamics literature has no model for this.

Performance attribution. Anthropic's data shows developers use AI in roughly 60% of their work but can fully delegate only 0–20% of tasks. When a human architect directs three agents that produce the code, who "performed"? Traditional performance management assumes you can attribute outcomes to individuals. That assumption is breaking.

Identity and meaning. Amodei's essay raises the deepest question: what happens to human purpose in a world where AI exceeds human capabilities across virtually all domains? This is an existential challenge for a discipline built on the premise that work is a site of human meaning-making.

The honest framing

Am I saying the transformation is complete, or that current multi-agent systems are mature? No. The failure rates are real. The reliability gaps are real. Many organizations are still running 2023-era AI governance playbooks against 2026-era capabilities.

But the parallel to previous transitions holds. Scientific management didn't arrive fully formed at the Bethlehem Steel Works. The human relations movement didn't spring complete from the Hawthorne plant. The discipline evolved because new coordination technologies forced new questions that existing frameworks couldn't answer.

Multi-agent systems are doing precisely this, at a velocity and scale that exceed any previous coordination technology shift.

The discipline isn't being reshaped because the technology is perfect. It's being reshaped because the questions have changed.

And organizational psychology has always followed the questions.