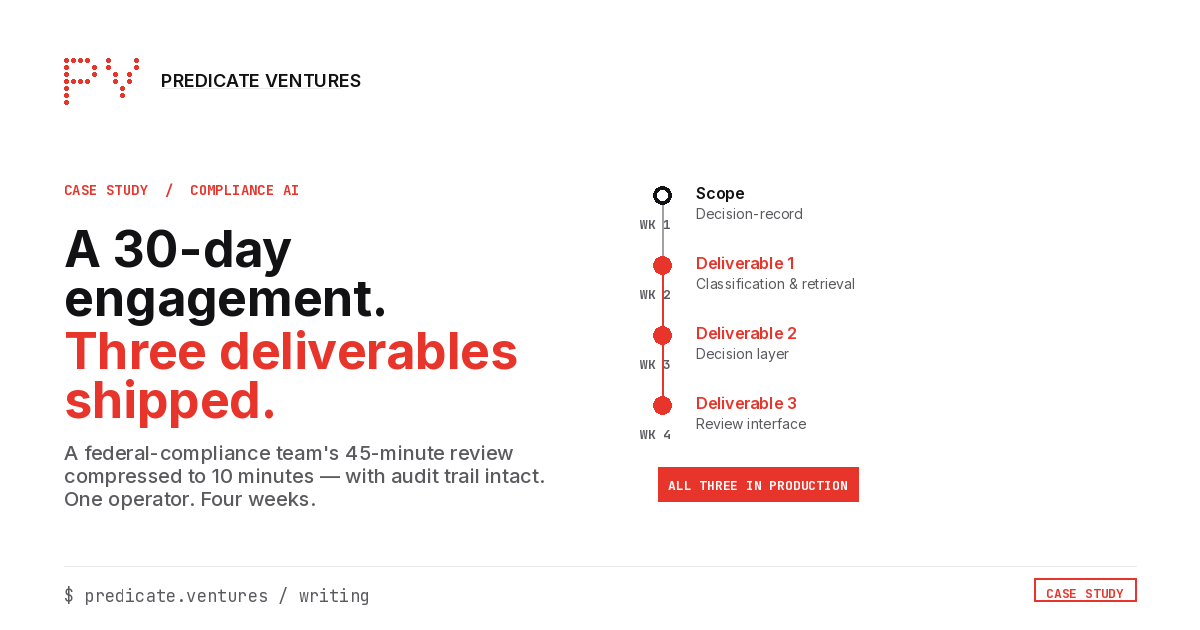

A 30-Day Engagement: Federal Compliance Workflow Automation

A sanitized case writeup of a single-operator AI engagement that shipped three deliverables in four weeks: one week of scoping, then one deliverable per week.

Blake Aber · Predicate Ventures · 2026

This is one example of what 30-day shippable AI looks like in practice. The client was a federal-compliance-adjacent software company. Names and specifics are sanitized. The shape of the work is what matters.

The situation

The company had a compliance-decisioning workflow that sat at the heart of their product: when a customer submitted an inquiry, a compliance team had to review the inquiry against a structured set of regulations and produce a decision with supporting evidence. The work was high-stakes (regulatory exposure if wrong), high-volume (hundreds of inquiries per week), and human-bottlenecked (each inquiry took an analyst 30-60 minutes to review). The team was small. The backlog was growing.

The CEO had been pitched a 6-month "AI transformation" engagement by an enterprise consultancy. The price was a six-figure monthly retainer with a 12-month minimum. The timeline didn't match the urgency, the price didn't match the headcount, and the scope didn't match what was actually broken.

What they needed was someone who could pick the right narrow piece, ship something useful in a defined window, and not require a 90-day discovery phase first.

The engagement

One operator (me), four working weeks. Total scope: three concrete AI-enabled deliverables that took the analyst's role from "spend 45 minutes per inquiry" to "spend 10 minutes per inquiry, with the rest done by AI and reviewed by the human." The arithmetic: 45 minutes down to 10 is roughly a 4.5x throughput improvement on the bottleneck workflow, with audit-grade traceability preserved for regulatory review.
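A minimal sketch of that per-inquiry arithmetic, using the 45-minute and 10-minute figures above. The weekly volume of 200 inquiries is a hypothetical placeholder standing in for "hundreds per week", not a number from the engagement.

```python
# Figures from the writeup: analyst minutes per inquiry, before and after.
MINUTES_BEFORE = 45
MINUTES_AFTER = 10


def throughput_multiplier(before: float, after: float) -> float:
    """Inquiries handled per analyst-hour after the change, relative to before."""
    return before / after


def analyst_hours_saved(inquiries_per_week: int, before: float, after: float) -> float:
    """Analyst hours freed per week at a given inquiry volume."""
    return inquiries_per_week * (before - after) / 60


multiplier = throughput_multiplier(MINUTES_BEFORE, MINUTES_AFTER)

# ASSUMPTION: 200 inquiries/week is illustrative only.
hours_saved = analyst_hours_saved(200, MINUTES_BEFORE, MINUTES_AFTER)
```

Swap in your own volume and per-inquiry times; the multiplier depends only on the before and after minutes.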

The four weeks broke down roughly as follows. Week 1: scoping, decision-record on what gets built and what gets cut, mapping the existing compliance workflow against the AI's capability and limits. Week 2: ship the classification-and-retrieval engine that maps inquiries to relevant regulatory evidence. Week 3: ship the deterministic decision layer that produces a recommended decision with full audit trail. Week 4: ship the analyst-facing interface that surfaces the AI's output with the supporting evidence in one place, ready for the analyst's 10-minute review.

What got shipped

Three artifacts. All three live in production.

Deliverable 1: classification-and-retrieval engine. The engine takes a customer inquiry, identifies the relevant regulatory framework, and pulls the specific evidence artifacts that bear on the decision. It passes them to the decision layer (Deliverable 2) as structured context. The analyst used to do all of this by hand: opening regulatory PDFs, searching for the right paragraphs, copy-pasting evidence into a working document. The engine compresses 20-30 minutes of pre-decision work into seconds.
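To make the shape of that step concrete, here is a toy sketch. The writeup does not describe the actual retrieval method, so naive keyword overlap is used purely as a stand-in; the `Clause` type, the framework names, and the clause text are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Clause:
    framework: str   # hypothetical framework label
    clause_id: str   # hypothetical clause identifier
    text: str


def tokenize(text: str) -> set:
    """Lowercase words longer than three characters, punctuation stripped."""
    words = (w.strip(".,;:()?!").lower() for w in text.split())
    return {w for w in words if len(w) > 3}


def retrieve(inquiry: str, corpus: list, top_k: int = 2) -> dict:
    """Rank clauses by word overlap with the inquiry; return structured context."""
    query = tokenize(inquiry)
    scored = [(len(query & tokenize(c.text)), c) for c in corpus]
    hits = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    hits = hits[:top_k]
    # The best-matching clause decides the framework; None means nothing matched.
    framework = hits[0][1].framework if hits else None
    return {"inquiry": inquiry, "framework": framework, "evidence": [c for _, c in hits]}


# Hypothetical two-clause corpus, for illustration only.
CORPUS = [
    Clause("EXAMPLE-FRAMEWORK-A", "A-1", "Records retention period for customer transaction records"),
    Clause("EXAMPLE-FRAMEWORK-B", "B-7", "Export licensing requirements for controlled software"),
]

context = retrieve("How long must we keep customer transaction records?", CORPUS)
```

The point is the output shape: one inquiry in, one framework plus a short list of evidence out, ready to hand to the next stage.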

Deliverable 2: deterministic decision layer. Given the inquiry plus the retrieved evidence, the decision layer produces a recommended decision with full traceability. The output records what evidence was considered, which regulatory clause was applied, what the recommendation is, and a confidence signal indicating how clear-cut the case is. The decision layer is not an LLM that "thinks about it." It's a structured harness that combines models (where they fit), rules (where they fit), and evidence retrieval (where the question is fundamentally a documentation question). The output is auditable end-to-end.
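A minimal sketch of what such a traceable record could look like. The field names, the `blocking_clauses` rule, and the fixed confidence values are assumptions for illustration; the production harness also combines models and retrieval, which this sketch leaves out.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Evidence:
    source_id: str   # hypothetical document identifier
    clause: str      # hypothetical clause label
    excerpt: str


@dataclass(frozen=True)
class DecisionRecord:
    inquiry_id: str
    evidence_considered: Tuple[Evidence, ...]
    clause_applied: Optional[str]
    recommendation: str   # "approve", "deny", or "escalate"
    confidence: float     # 0.0 (unclear) to 1.0 (clear-cut)


def recommend(inquiry_id: str, evidence: list, blocking_clauses: set) -> DecisionRecord:
    """Rule-only path: the same inputs always yield the same record."""
    considered = tuple(evidence)
    if not considered:
        # No evidence retrieved: hand the case to a human without a guess.
        return DecisionRecord(inquiry_id, considered, None, "escalate", 0.0)
    for item in considered:
        if item.clause in blocking_clauses:
            # ASSUMPTION: 0.9 is a placeholder confidence for a direct rule hit.
            return DecisionRecord(inquiry_id, considered, item.clause, "deny", 0.9)
    # ASSUMPTION: 0.6 is a placeholder for "nothing blocking was found".
    return DecisionRecord(inquiry_id, considered, considered[0].clause, "approve", 0.6)


record = recommend(
    "INQ-0001",
    [Evidence("DOC-1", "A-1", "example excerpt")],
    blocking_clauses={"A-1"},
)
```

Every field a reviewer or auditor would ask about sits on the record itself, so nothing has to be reconstructed after the fact.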

Deliverable 3: analyst-facing review interface. The analyst sees the AI's recommended decision, its full evidence trail, and its confidence signal in one screen. The job shifts from researcher-and-decider to reviewer-and-validator. The 45-minute task compresses to a 10-minute task. The audit log captures both the AI's recommendation and the analyst's final call.
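One way to picture an audit entry that holds both calls side by side. This is a sketch under assumptions: the field names, the `overridden` flag, and the JSON-lines serialization are hypothetical, not the client's actual log format.

```python
import json
from datetime import datetime, timezone


def build_audit_entry(
    inquiry_id: str,
    ai_recommendation: str,
    ai_confidence: float,
    analyst_id: str,
    analyst_decision: str,
    reviewed_at: datetime,
) -> dict:
    """Record the AI's recommendation next to the analyst's final call."""
    return {
        "inquiry_id": inquiry_id,
        "ai_recommendation": ai_recommendation,
        "ai_confidence": ai_confidence,
        "analyst_id": analyst_id,
        "analyst_decision": analyst_decision,
        # Flag disagreements so overrides are easy to find in a later review.
        "overridden": analyst_decision != ai_recommendation,
        "reviewed_at": reviewed_at.isoformat(),
    }


entry = build_audit_entry(
    "INQ-0001", "approve", 0.6, "analyst-01", "deny",
    datetime(2026, 1, 5, 14, 30, tzinfo=timezone.utc),
)

# One JSON object per line keeps the log append-only and easy to diff.
line = json.dumps(entry, sort_keys=True)
```

Keeping the two decisions in the same entry means an auditor never has to join separate logs to see where the human disagreed with the AI.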

Each deliverable shipped in roughly one calendar week. None of the three required a six-month engagement, a discovery phase, or an enterprise platform purchase.

What it cost

Roughly the cost of one month of a senior contract engineer's time. Not enterprise-engagement money. Not vendor-software-license money. A specific scope, a specific timeline, a specific price. If it hadn't worked in 30 days, the client would have paid for one month of a senior person and owned the artifacts produced. They could have hired someone else to extend the work, shelved it, or run with what existed.

The client didn't shelve it. The deliverables are still in production. The team has continued the work in-house from there.

What this engagement is not

It is not a "transformation." It didn't replace the analyst team. It didn't restructure the company's operating model. It didn't require a procurement cycle, a steering committee, or a press release. It was three workflow improvements scoped narrowly enough to ship in a month, against a workflow chosen specifically because it scored cleanly on the 30-day shippable criteria (one named workflow, 5+ hours/week of named-analyst time, repeatable shape, measurable output, human-in-the-loop review).
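The five criteria in that parenthetical can be read as a simple pass/fail checklist. The sketch below is an assumption about how to encode them: the field names are hypothetical, and the real Scorecard may weight or score criteria differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowCandidate:
    named_workflows: int           # how many distinct workflows are in scope
    analyst_hours_per_week: float  # named-analyst time spent on the workflow
    repeatable_shape: bool
    measurable_output: bool
    human_in_the_loop: bool


def unmet_criteria(c: WorkflowCandidate) -> list:
    """Return the criteria the candidate fails; an empty list means it scores cleanly."""
    checks = [
        ("one named workflow", c.named_workflows == 1),
        ("5+ hours/week of named-analyst time", c.analyst_hours_per_week >= 5),
        ("repeatable shape", c.repeatable_shape),
        ("measurable output", c.measurable_output),
        ("human-in-the-loop review", c.human_in_the_loop),
    ]
    return [name for name, passed in checks if not passed]


# Hypothetical candidates, for illustration only.
clean = WorkflowCandidate(1, 40.0, True, True, True)
sprawling = WorkflowCandidate(3, 2.0, True, False, True)

clean_gaps = unmet_criteria(clean)
sprawling_gaps = unmet_criteria(sprawling)
```

Returning the failed criteria by name, rather than a single score, makes it obvious what would have to change before a workflow is worth scoping.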

The engagement model is the part enterprise AI gets wrong for small and mid-sized businesses. You don't need a transformation. You need someone to pick the right narrow piece and ship it.

What worked, what didn't

What worked: scoping discipline. The single biggest reason 30-day engagements fail is that scope creeps in Week 2. Holding the line on "three deliverables, defined in Week 1, shipped one per week" was the difference between landing in 30 days and landing in 90.

What didn't work as cleanly: the original Week 1 scope had four deliverables. The fourth was a customer-facing self-service portal that would have let customers triage their own inquiries before submitting. We cut it at the end of Week 1 because building it would have required UI design work that wasn't in scope, plus a customer-data-handling layer that needed a dedicated security review. The right call was to ship the three deliverables and propose the fourth as a separate engagement. The client agreed. The portal became a follow-on conversation that happened on its own merits.

Why this matters for your business

If you have a workflow that scores cleanly on the 30-Day Shippable AI Scorecard, the engagement model that worked for the federal-compliance company can work for you. The pattern is industry-agnostic. It has run for me at Fortune-500 healthcare scale (CVS Aetna/Signify integration), at growth-stage marketplace scale (Whop fraud detection and content integrity), and at single-operator federal-compliance scale (this engagement). The same scoping discipline applies. The same pricing model applies. The same 30-day envelope applies.

The hard part is picking the right workflow. The Scorecard helps with that. The engagement that follows is the easy part.